Cisco's $28 billion acquisition of Splunk in 2024 changed more than ownership. It changed the renewal calculus for every enterprise security team mid-contract. Roadmap conversations that used to be straightforward now run through an organization whose primary business is network infrastructure, not SIEM development. That ambiguity is triggering re-evaluations that would otherwise have waited until a later renewal.

If your team is evaluating Splunk alternatives, the field looks fundamentally different from the SIEM market of five years ago. Cloud-native platforms, index-free architectures, and agentic AI have all entered the conversation. The question is whether a new platform solves a different problem or the same problem with a different price tag.

An architectural shift from parse-at-ingestion to index-free and data-lake log storage has raised that question sharply. This guide covers the major Splunk competitors across four architectural archetypes, with honest assessments of what each solves and where each stops. The final section makes the case that the evaluation question most teams are asking leaves the most important variable unmeasured.

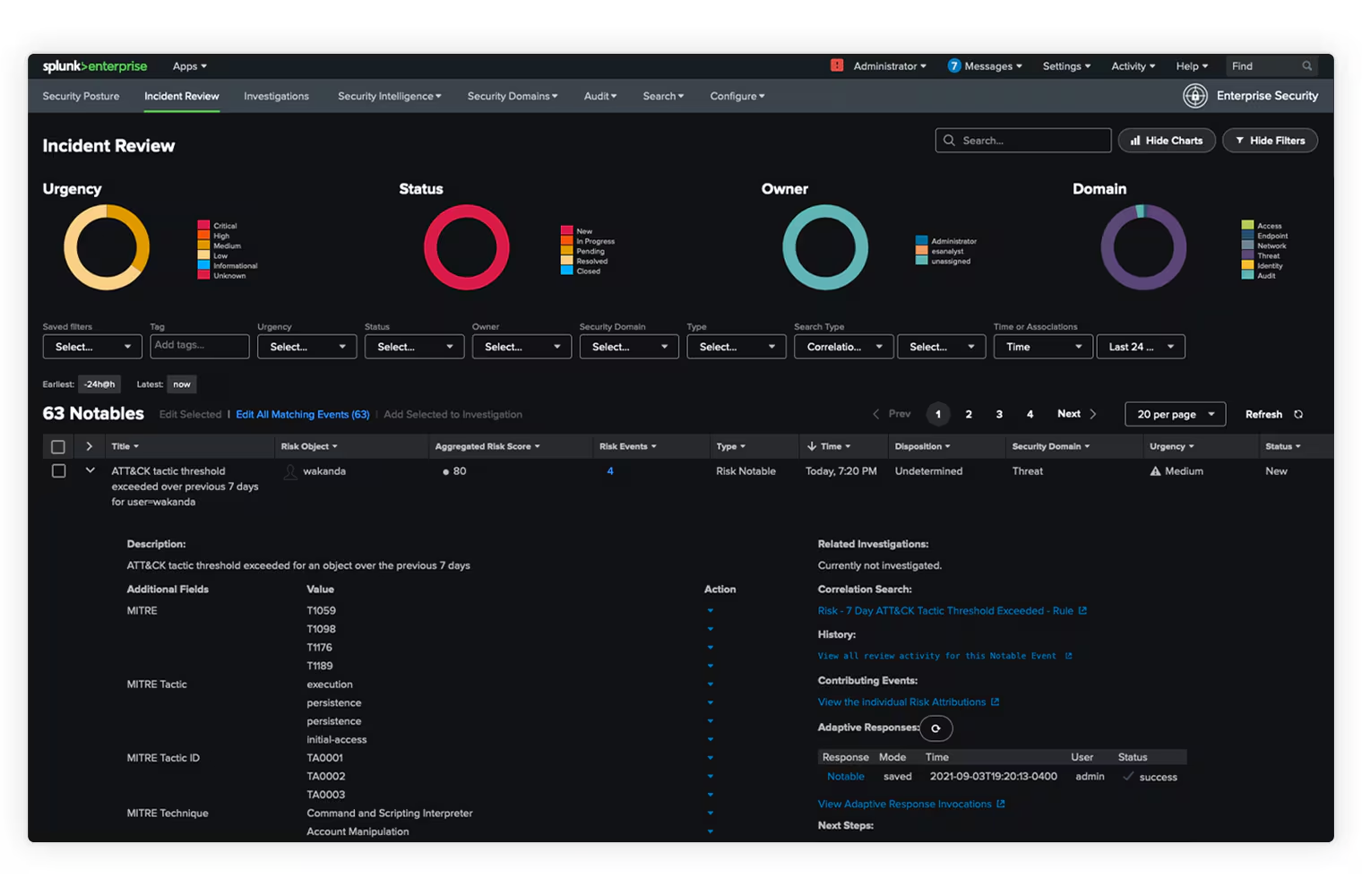

TLDR: Splunk holds 46.78% SIEM market share and earned its eleventh consecutive Gartner Magic Quadrant Leader designation in 2025. The product works. The problem is the ingestion pricing model that forces teams to choose what to monitor based on budget rather than risk, creating the coverage gaps that AI agents inherit. Every alternative reviewed here addresses Splunk's cost or complexity. Only parse-at-query architecture addresses the structural coverage tradeoff that produces those gaps in the first place.

Key Takeaways:

Cisco completed its $28 billion acquisition of Splunk in 2024. Splunk was named a Gartner Magic Quadrant Leader for the eleventh consecutive year in 2025. The product is still respected. But procurement teams are entering renewal conversations without a clear view of where the roadmap goes under Cisco's ownership, particularly as Cisco integrates Splunk capabilities into its broader XDR and networking portfolio. Many organizations are treating the renewal cycle as a de facto re-evaluation. Not because Splunk broke, but because the cost conversation now runs through an organization with different incentives.

The arrival of index-free log architectures has reset the performance and cost expectations that made Splunk's pricing model defensible for years. CrowdStrike's LogScale engine (originally developed as Humio) and Panther's Snowflake-backed security data lake have both demonstrated that fast query performance is achievable without per-byte indexing overhead. This is a fundamentally different architectural premise about when log processing cost should be incurred.

Teams are asking two questions: is the invoice lower, and does the cost structure actually change, or are they buying into the same architectural constraints at a discount? Most evaluations of Splunk alternatives stop at the first question. This guide addresses both.

Splunk's volume-based ingestion model charges by data volume at index time. At 500GB/day, annual costs reach $788,000 or more based on publicly disclosed enterprise procurement data. Small deployments at 1 to 10GB/day run $1,800 to $18,000 per year. At enterprise scale, Splunk becomes one of the largest line items in the security budget, and that number climbs further because Splunk SOAR requires a separate license on top of the base SIEM contract.
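The figures above can be reduced to an effective per-GB rate to see how little the unit price moves with scale. This is a minimal sketch that uses only the numbers quoted in the paragraph and assumes ingestion runs 365 days a year; the function name is illustrative.

```python
DAYS_PER_YEAR = 365  # assumption: logs are ingested every day of the year


def effective_rate_per_gb(annual_cost_usd: float, gb_per_day: float) -> float:
    """Annual cost divided by the total GB ingested over the year."""
    return annual_cost_usd / (gb_per_day * DAYS_PER_YEAR)


# Figures quoted in the text: $1,800/year at 1 GB/day, $788,000/year at 500 GB/day.
small_rate = effective_rate_per_gb(1_800, 1)
large_rate = effective_rate_per_gb(788_000, 500)

print(f"small deployment: ${small_rate:.2f}/GB")
print(f"500 GB/day:       ${large_rate:.2f}/GB")
```

On these figures the effective rate falls from roughly $4.93 to roughly $4.32 per GB, so cost stays close to linear in volume. That near-linearity is what makes capping volume the obvious budget lever.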

The pricing pushes teams the same way every time: ingest the high-priority sources, leave the rest out. IDC found the average enterprise monitors about two-thirds of its environment.

That missing third does not produce a slower queue. It produces silence. When an attacker moves through an unmonitored segment, the SOC has nothing to detect it with, nothing to reconstruct the path, and nothing to trace back to patient zero. The investigation starts blind.

This is what happens when parsing has to be decided at ingestion. Cost scales with volume, so teams cap volume. Any alternative built the same way ends up in the same place. The price tag changes. The math doesn't.

So the real question for any Splunk alternative: does it force the same coverage decisions at ingestion, or does it take them off the table?

Cloud-native SIEMs eliminate on-premises server management, reduce per-GB ingestion costs by 30% to 70% compared to Splunk Enterprise at equivalent volumes, and deploy in days rather than weeks. For teams whose primary Splunk frustration is cost and operational complexity, this archetype addresses the immediate pain directly.

Lower costs remove budget pressure. They don't remove the coverage choice. If a team excluded a log source under Splunk because ingestion was cost-prohibitive, a cheaper platform changes the math. It does not change whether the team has the bandwidth to configure, onboard, and maintain that source. Every new log source requires parser configuration, field mapping, alert rule creation, and ongoing maintenance when upstream formats change. Cheaper storage solves the budget constraint. It does not solve the operational one.

For Microsoft-heavy organizations, the per-GB pricing hides a larger advantage. Microsoft 365 audit logs, Azure Activity logs, and Defender alerts flow into Sentinel at no additional cost; Azure AD (now Microsoft Entra ID) sign-in logs are billed as regular ingestion. For a typical enterprise, these free sources represent 30% to 50% of total log volume before the approximately $2.46/GB pay-as-you-go meter starts, with commitment tiers available at higher volumes.
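To see what the free share does to the bill, the arithmetic can be sketched as below. The $2.46/GB rate and the 30% to 50% range come from the paragraph above; the 500 GB/day volume is a hypothetical input, and the sketch ignores commitment tiers, retention charges, and any other meter.

```python
PAYG_RATE_USD_PER_GB = 2.46  # approximate pay-as-you-go rate quoted in the text


def sentinel_annual_ingestion_cost(
    total_gb_per_day: float,
    free_fraction: float,
    rate_usd_per_gb: float = PAYG_RATE_USD_PER_GB,
    days_per_year: int = 365,
) -> float:
    """Annual ingestion cost after removing the share of volume that is free."""
    if not 0.0 <= free_fraction <= 1.0:
        raise ValueError("free_fraction must be between 0 and 1")
    billable_gb_per_day = total_gb_per_day * (1.0 - free_fraction)
    return billable_gb_per_day * rate_usd_per_gb * days_per_year


# Hypothetical 500 GB/day environment at the two ends of the quoted range.
for share in (0.0, 0.3, 0.5):
    cost = sentinel_annual_ingestion_cost(500, share)
    print(f"free share {share:.0%}: ${cost:,.0f}/year")
```

The free share scales the bill directly, so it is worth measuring for your own environment rather than assuming the midpoint of the range.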

Microsoft Copilot for Security integration represents the most production-mature AI investigation layer in this archetype. For SOC teams that have seen AI pilots underperform, Sentinel's Copilot integration is worth evaluating as a functional triage accelerator grounded in Microsoft threat intelligence, not a feature checkbox.

KQL (Kusto Query Language) replaces SPL (Splunk Processing Language), which is a real retraining investment for teams with existing Splunk query expertise. Organizations with years of custom SPL-based detection rules, dashboards, and correlation searches face a translation effort that typically takes two to four months for mature deployments. Budget for that transition time explicitly before committing.

Chronicle (now part of Google Security Operations) normalizes logs into a common schema, the Unified Data Model, at ingestion, enabling cross-source correlation without custom field mapping work. For teams spending analyst time maintaining SPL queries that break when log formats change, the normalized model reduces query maintenance overhead meaningfully. Google's threat intelligence integration (VirusTotal, Google Safe Browsing) surfaces additional context for known-bad indicators without separate enrichment connectors.

YARA-L, Chronicle's detection rule language, is purpose-built for security detection. Rules can be tagged with MITRE ATT&CK technique IDs, which simplifies coverage reporting for compliance and governance purposes. Chronicle wins for Google Cloud-native organizations or security teams where query maintenance overhead against changing log formats is the dominant cost.

Panther's Snowflake-backed security data lake separates storage from compute entirely, removing per-byte indexing overhead as a cost driver. Detection-as-code using Python and SQL means detection logic lives in version control alongside application code, is deployed through CI/CD pipelines, and is testable before production. For engineering-led security teams that want detection treated as software rather than platform configuration, this is the most technically native architecture in the cloud-native archetype.

The trade is explicit: Panther costs less to operate at scale than Splunk but requires security engineering resources to build and maintain detection-as-code workflows. That means writing Python-based detection functions, managing CI/CD deployment pipelines for detection rules, and debugging query performance against Snowflake's compute model. Teams without a dedicated security engineer will find the operational model harder to sustain than a more opinionated platform.
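For a sense of what detection-as-code looks like in practice, here is a minimal sketch in the style of a Panther Python detection, where a `rule(event)` function returns `True` when an event should alert. The event is treated as a plain dictionary shaped like an AWS CloudTrail `ConsoleLogin` record; the specific detection and the sample events are illustrative assumptions, not a rule taken from Panther's library.

```python
def rule(event: dict) -> bool:
    """Alert on a successful AWS console login that did not use MFA."""
    if event.get("eventName") != "ConsoleLogin":
        return False
    response = event.get("responseElements") or {}
    extra = event.get("additionalEventData") or {}
    succeeded = response.get("ConsoleLogin") == "Success"
    mfa_used = extra.get("MFAUsed") == "Yes"
    return succeeded and not mfa_used


def title(event: dict) -> str:
    """Human-readable alert title built from the event."""
    identity = event.get("userIdentity") or {}
    return f"Console login without MFA: {identity.get('arn', '<unknown>')}"


# Because the detection is ordinary Python, it can be unit-tested in CI
# before it ever runs against production logs.
no_mfa_login = {
    "eventName": "ConsoleLogin",
    "responseElements": {"ConsoleLogin": "Success"},
    "additionalEventData": {"MFAUsed": "No"},
    "userIdentity": {"arn": "arn:aws:iam::123456789012:user/example"},
}
mfa_login = {**no_mfa_login, "additionalEventData": {"MFAUsed": "Yes"}}

assert rule(no_mfa_login) is True
assert rule(mfa_login) is False
```

The assertions at the bottom are the point: detection logic that lives in version control can fail a pull request instead of failing silently in production.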

CrowdStrike Falcon Next-Gen SIEM is built on the LogScale engine, which achieves fast query performance through index-free log storage rather than per-byte indexing. For teams already running Falcon across their endpoint environment, the integration eliminates separate SIEM connector maintenance and routes endpoint, identity, and network detection telemetry directly into the investigation layer without additional configuration.

Charlotte AI provides AI-assisted investigation grounded in Falcon's telemetry layer. Because Charlotte reasons over CrowdStrike's own detection data (process trees, file integrity events, network connections captured by the Falcon agent), its investigation context is more tightly scoped than generic LLM-on-logs approaches. That tight scoping produces more accurate investigation outputs for the coverage area it addresses. CQL (CrowdStrike Query Language) handles structured log queries against this telemetry natively.

The architectural ceiling is specific: Falcon Next-Gen SIEM is excellent for environments where Falcon covers the detection surface. Organizations with non-CrowdStrike endpoints, legacy on-premises infrastructure, or log sources outside the Falcon ecosystem (firewall logs, DNS traffic, SaaS application audit trails, custom application logs) will find coverage gaps at the platform boundaries.

Charlotte AI's investigation quality degrades outside the native Falcon telemetry layer, because external connectors provide less structured context than the agent's own instrumentation produces.

Exabeam and IBM QRadar beat Splunk when detection accuracy is the bottleneck, not ingestion cost. Both lean on UEBA (User and Entity Behavior Analytics) to catch what signature rules and correlation queries miss. They're worth a hard look when rule-based detection is missing threats in data you already collect.

Exabeam's Smart Timelines stitch user and entity behavior together over time, so an unusual login from a new geography shows up as part of a pattern instead of a one-off alert. Analysts stop triaging individual alerts and start reading behavioral timelines that surface the full attack chain. The tradeoff: you need two to four weeks of historical data before the baselines are reliable, so detection quality ramps up after deployment rather than landing fully formed on day one.
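The baseline ramp-up can be illustrated with a toy model. The sketch below is not Exabeam's algorithm; it is a deliberately simple "new geography" check that refuses to flag anomalies until a user has at least 14 days of history, an assumed threshold taken from the lower end of the two-to-four-week range above. All class and field names are hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta

MIN_BASELINE_DAYS = 14  # assumption: lower end of the two-to-four-week range


class GeoBaseline:
    """Tracks which countries each user has logged in from."""

    def __init__(self) -> None:
        self._seen = defaultdict(set)  # user -> set of countries observed
        self._first_seen = {}          # user -> date of first observed login

    def observe(self, user: str, country: str, day: date) -> str:
        """Classify a login as 'learning', 'normal', or 'anomaly', then record it."""
        first = self._first_seen.setdefault(user, day)
        mature = (day - first).days >= MIN_BASELINE_DAYS
        known = country in self._seen[user]
        self._seen[user].add(country)
        if not mature:
            return "learning"  # not enough history to judge yet
        return "normal" if known else "anomaly"


baseline = GeoBaseline()
start = date(2025, 1, 1)
for offset in range(20):
    baseline.observe("alice", "US", start + timedelta(days=offset))

# After the baseline matures, a login from a country never seen before stands out.
print(baseline.observe("alice", "BR", start + timedelta(days=20)))
```

A login in the first two weeks can only ever come back as `learning`, which is the day-one detection gap the tradeoff describes.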

QRadar's strength is connector depth. It covers the legacy stuff newer platforms haven't bothered with: mainframes, industrial control systems, AS/400, proprietary apps no modern SIEM prioritizes. If you're running 15-year-old infrastructure next to cloud workloads, that gap matters.

The catch is the same one Splunk has. UEBA only improves detection over the data it actually sees. Run it across two-thirds of your environment and you get behavioral anomalies in two-thirds of your environment. An attacker who establishes persistence in an unmonitored SaaS app and moves laterally through unmonitored east-west traffic generates nothing in Exabeam's timeline, no matter how good the model is. A smarter detection engine doesn't close a coverage gap at the data layer.

Elastic Security is the strongest option for teams that want full control over their detection infrastructure and can absorb the operational overhead. Originating from the ELK stack, Elastic's cost model is genuinely different from Splunk at scale because Elastic is licensed by cluster resources rather than metered per byte ingested. The trade is explicit: Elastic costs less per GB but requires dedicated engineering time for cluster management (node sizing, shard allocation, index lifecycle policies), detection rule maintenance, and parser tuning as log environments change. Teams without at least one engineer who understands Elasticsearch internals will find operational stability harder to maintain than on any other platform in this comparison.

Datadog Cloud SIEM fits a specific profile: organizations already running Datadog for application performance monitoring and infrastructure observability that want to consolidate security alerting into an existing platform. Security alerts surface in the same dashboards engineering teams already use, share the same log ingestion pipeline, and benefit from the same correlation infrastructure. Datadog wins when security alerting is part of broader operational monitoring rather than a dedicated SOC function, and when the team values a single vendor relationship over security-specific depth.

Every platform reviewed above addresses real Splunk frustrations. Microsoft Sentinel costs less per GB for Azure shops. CrowdStrike Falcon Next-Gen SIEM integrates more tightly with Falcon telemetry. Elastic Security gives engineering teams more control. Exabeam produces better behavioral detection.

None of them address the question IDC's research surfaces: why does the average enterprise monitor only about two-thirds of its environment, and what architectural change actually closes that gap?

The answer is the ingestion model. Every platform that requires parsing decisions at ingestion time creates the same structural tradeoff Splunk creates: teams ingest what they can afford to parse and exclude the rest. The dollar amount changes. The decision logic does not.

Parse-at-query inverts the ingestion model. Logs are stored in raw form without requiring upfront schema or parsing decisions. Parsing happens at query time, when specific fields are needed for a specific investigation. The coverage tradeoff that creates blind spots in every ingestion-first platform disappears at the architecture level.

This changes forensic investigation fundamentally: when an incident involves a log source that was collected but never parsed, the data is already there. Analysts query it retroactively rather than discovering a gap in the evidence chain. Teams stop choosing what to monitor based on cost and start choosing based on risk.
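The idea can be shown in a few lines. The sketch below is a toy in-memory store, not Strike48's implementation: ingestion appends raw lines with no schema, and field extraction happens only when a query supplies a pattern. The class name and the sample log lines are illustrative assumptions.

```python
import re
from typing import Dict, Iterator, List


class RawLogStore:
    """Stores log lines exactly as received; parsing is deferred to query time."""

    def __init__(self) -> None:
        self._lines: List[str] = []

    def ingest(self, line: str) -> None:
        # No parser, no field mapping, no schema decision at ingestion.
        self._lines.append(line)

    def query(self, pattern: str) -> Iterator[Dict[str, str]]:
        """Apply a regex with named groups to every stored line at query time."""
        compiled = re.compile(pattern)
        for line in self._lines:
            match = compiled.search(line)
            if match:
                yield match.groupdict()


store = RawLogStore()
store.ingest("Jan 12 03:14:07 bastion sshd[411]: Failed password for root from 203.0.113.9 port 52144 ssh2")
store.ingest("Jan 12 03:14:09 bastion sshd[411]: Accepted publickey for deploy from 198.51.100.4 port 40022 ssh2")

# The fields are defined during the investigation, not when the logs arrived.
failed = list(store.query(r"Failed password for (?P<user>\S+) from (?P<src_ip>\S+)"))
print(failed)
```

The design choice is a trade: ingestion becomes cheap and schema-free, while each query pays the parsing cost for the lines it touches. A production system would need to bound that scan cost with time ranges, partitioning, or similar measures.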

Strike48's implementation of parse-at-query demonstrates what this looks like in production. Search-in-place connectors query logs directly in S3, Splunk, Elastic, and other existing stores without requiring migration, so the path to complete visibility does not start with a rip-and-replace project.

SOC teams now receive an average of 960 security alerts per day, with 40% going uninvestigated. Enterprise organizations with 30 or more integrated security tools face over 4,400 daily alerts. Every Splunk competitor reviewed in this guide has shipped or announced AI-assisted triage, natural language querying, or automated investigation. The common limitation: AI that reasons over incomplete log data produces confident analysis of the part it can see and silence about the part it cannot.

The problem is knowledge scope, not model quality. An agent given access to two-thirds of an environment produces investigation outputs grounded in that two-thirds. The excluded sources generate nothing: no alerts, no baselines, no artifacts.

One architectural response to this is micro-agent design. Rather than deploying a monolithic AI asked to investigate everything, break work into narrowly scoped agents, each responsible for a specific task. A coordinator agent receives an alert and splits it into defined jobs: check these IPs, pull this user's authentication history, run this behavioral baseline. Specialist agents handle each task with a constrained knowledge graph and tool set. Strike48 uses this approach with GraphRAG persona graphs and MCP tool constraints to keep each agent's reasoning anchored to relevant context. Agents given small, specific jobs have far less room to hallucinate. In early deployments, this architecture achieved mean time to detection below eight minutes.
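The coordinator-and-specialist split can be sketched without any model in the loop. The example below is a structural illustration only: it contains no LLM, GraphRAG, or MCP code, the tools are stubs, and every name in it is hypothetical. What it shows is the constraint itself, where each task type maps to exactly one permitted tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Task:
    kind: str    # which specialist should handle the task
    target: str  # the single entity the specialist is allowed to look at


# Stub tools standing in for real lookups.
def ip_reputation(ip: str) -> str:
    return f"reputation lookup for {ip}"


def auth_history(user: str) -> str:
    return f"authentication history for {user}"


# Each specialist is bound to one tool; it cannot call anything else.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "check_ip": ip_reputation,
    "auth_history": auth_history,
}


def plan(alert: dict) -> List[Task]:
    """Coordinator step: split one alert into small, well-defined jobs."""
    tasks = [Task("check_ip", ip) for ip in alert.get("ips", [])]
    if alert.get("user"):
        tasks.append(Task("auth_history", alert["user"]))
    return tasks


def investigate(alert: dict) -> List[str]:
    """Dispatch every planned task to its specialist and collect the findings."""
    findings = []
    for task in plan(alert):
        tool = SPECIALISTS.get(task.kind)
        if tool is None:
            raise ValueError(f"no specialist registered for task kind {task.kind!r}")
        findings.append(tool(task.target))
    return findings


print(investigate({"ips": ["203.0.113.9"], "user": "alice"}))
```

Keeping the task-to-tool mapping explicit means an unexpected task type fails loudly instead of being improvised.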

See What Is Agentic Log Intelligence? for the full framework.

The TCO calculation matters more than per-GB pricing. Per-GB ingestion cost is a small fraction of total SIEM cost of ownership for most enterprises. Research from guptadeepak.com quantifies 200 daily alerts at 15 minutes each as $300,000 to $500,000 per year in analyst labor. A platform that reduces confirmed investigation workload by 50% through agent-driven triage may cost more per GB and save more in total. Any evaluation that optimizes ingestion cost without modeling analyst time is measuring the wrong variable.
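A rough model makes the comparison concrete. In the sketch below, the 200 alerts per day and 15 minutes per alert come from the text, as does the 50% workload reduction. The ingestion volume, both per-GB prices, and the $25 hourly analyst rate are hypothetical inputs chosen for illustration, so substitute your own figures.

```python
def annual_tco(
    gb_per_day: float,
    usd_per_gb: float,
    alerts_per_day: float,
    minutes_per_alert: float,
    hourly_rate_usd: float,
    workload_reduction: float = 0.0,
    days_per_year: int = 365,
) -> float:
    """Ingestion cost plus analyst triage labor for one year."""
    ingestion = gb_per_day * usd_per_gb * days_per_year
    triage_hours = alerts_per_day * minutes_per_alert / 60 * days_per_year
    labor = triage_hours * hourly_rate_usd * (1.0 - workload_reduction)
    return ingestion + labor


# Hypothetical: 100 GB/day, analysts at a loaded rate of $25/hour.
cheaper_per_gb = annual_tco(100, 2.00, 200, 15, 25)
pricier_with_triage = annual_tco(100, 4.00, 200, 15, 25, workload_reduction=0.5)

print(f"cheaper per GB, no triage help: ${cheaper_per_gb:,.0f}")
print(f"pricier per GB, 50% less work:  ${pricier_with_triage:,.0f}")
```

With these inputs the platform that costs twice as much per GB comes out cheaper overall, because 200 alerts at 15 minutes each is 18,250 analyst hours a year and that term dominates the total.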

Run a pre-commitment coverage audit. Before finalizing any Splunk alternative, determine what percentage of current log sources are monitored and whether the evaluation criteria include a path to closing that gap. A cheaper SIEM with the same coverage exclusions does not reduce risk. It reduces the invoice.
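The audit itself is simple once an inventory exists. This is a minimal sketch that assumes you can list every log source and mark whether it is currently monitored; the source names in the example are hypothetical.

```python
from typing import Dict, List, Tuple


def coverage_report(inventory: Dict[str, bool]) -> Tuple[float, List[str]]:
    """Return the percentage of sources monitored and the names of those that are not."""
    if not inventory:
        raise ValueError("inventory is empty; list your log sources first")
    unmonitored = sorted(name for name, monitored in inventory.items() if not monitored)
    monitored_count = len(inventory) - len(unmonitored)
    return 100.0 * monitored_count / len(inventory), unmonitored


# Hypothetical inventory: True means the source is ingested and monitored today.
inventory = {
    "edr": True,
    "identity-provider": True,
    "perimeter-firewall": True,
    "cloud-audit": True,
    "east-west-netflow": False,
    "saas-audit-trails": False,
}

percent, gaps = coverage_report(inventory)
print(f"{percent:.1f}% of sources monitored; unmonitored: {gaps}")
```

Counting sources is the crudest possible measure. Weighting each source by volume or by risk would give a better picture, but even this version turns coverage into a number that can go into the evaluation criteria.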

Most Splunk alternatives deliver what they promise: lower cost, simpler deployment, or better detection quality over the infrastructure you are already monitoring. The question most evaluations skip is whether the infrastructure you are monitoring is complete. If the answer is no, the AI augmentation every platform offers inherits the same blind spots your current SIEM has.

The intelligence is already in your logs.

See what complete visibility and purpose-built agents look like. Request a demo.

Q: Is Splunk still the best SIEM despite its cost and acquisition uncertainty?

A: For some environments, yes. Splunk Enterprise Security remains the most mature SIEM in integration breadth, SPL-powered query flexibility, and enterprise deployment experience. Switching costs are high. Years of custom SPL correlation searches, dashboards, and analyst expertise do not port directly to KQL or YARA-L. That retraining typically takes two to four months for a mature deployment. The Cisco acquisition introduces roadmap uncertainty, not a broken product. Teams with strong Splunk ROI should engage Cisco directly on the roadmap question and build a cost comparison that includes migration overhead before committing to an alternative.

Q: What are the cheapest Splunk alternatives when you factor in analyst labor?

A: Per-GB ingestion cost is the wrong optimization target. At 200 daily alerts requiring 15 minutes each, analyst labor costs $300,000 to $500,000 per year, dwarfing ingestion fees at any platform. Microsoft Sentinel and Elastic Security typically win on per-GB cost. But if either platform produces the same alert volume as Splunk, analyst time costs remain unchanged. The cheapest total-cost platform is the one that reduces confirmed investigation workload, not just storage overhead. Strike48's agent-driven triage automates Tier 1 investigation entirely, which changes the labor cost equation at the architecture level rather than shifting it between line items.

Q: What is the difference between a traditional SIEM and an agentic log intelligence platform?

A: A traditional SIEM ingests, indexes, and surfaces alerts for human analysts to triage and investigate. An agentic log intelligence platform like Strike48 combines complete log coverage through parse-at-query architecture with autonomous AI agents that run investigations end to end. Traditional SIEMs require human triage at each stage. Agentic platforms run investigation workflows autonomously and escalate only confirmed or high-confidence threats, with human-in-the-loop approval gates for critical actions like endpoint isolation. The prerequisite is complete data, because AI agents that fire against incomplete log coverage produce faster wrong answers about what they can see.

Q: How long does it take to migrate from Splunk to an alternative?

A: Migration timelines vary by architecture. Cloud-native SIEMs (Sentinel, Chronicle) typically deploy in days to weeks, though re-creating custom SPL detection logic in a new query language adds two to four months for mature deployments. Behavioral analytics platforms (Exabeam, QRadar) require weeks to months to build behavioral baselines from historical data. Strike48's search-in-place connectors take a different approach: they query your existing Splunk data where it already lives, so the path to visibility is minutes to go-live with no forced data movement. Smart collection covers approximately 80% of log sources in under a day for centralized ingestion. Strike48 does not require you to abandon your Splunk investment to gain complete coverage.

Q: What happens when your SIEM's AI encounters a log source it was not trained on?

A: Most SIEM AI features depend on pre-defined parsers and schemas. When a new or unrecognized log format arrives, the AI either ignores it or produces unreliable analysis. This is a common failure mode during incident investigations, when the critical evidence is often in a log source the team had not prioritized. Strike48's auto-generated parsers address this by creating parsing logic for new log sources automatically. Agents can also read semi-structured logs directly when needed, so visibility does not wait for parser development. The architectural goal is that no log source is invisible to the platform, even before a formal parser exists.