Adversaries are already using agents. Defenders mostly aren't.

That's the story underneath the data we collected from 100 cybersecurity leaders for our new report, The State of Agentic Security 2026: Breaking Through the Trust Barrier. The survey set out to answer a simple question: where do security leaders actually stand on AI agents in the SOC, and what's holding them back?

84% of the security leaders we surveyed agree that AI agents should be doing L1 SOC work. Only 22% are ready to fully automate it. That 62-point gap between agreement and deployment is the defining tension of agentic security in 2026, and it points at something bigger than cybersecurity. This is the first real stress test of whether enterprises will deploy the agentic AI they say they want.

Adoption is happening, but slowly. 36% of respondents have AI agents running in production for at least one use case. Another 34% have run a pilot or POC. 46% are still researching and evaluating options. The intention is there. The deployment isn't catching up.

So what's holding leaders back? Two things, compounding on each other: trust and data visibility.

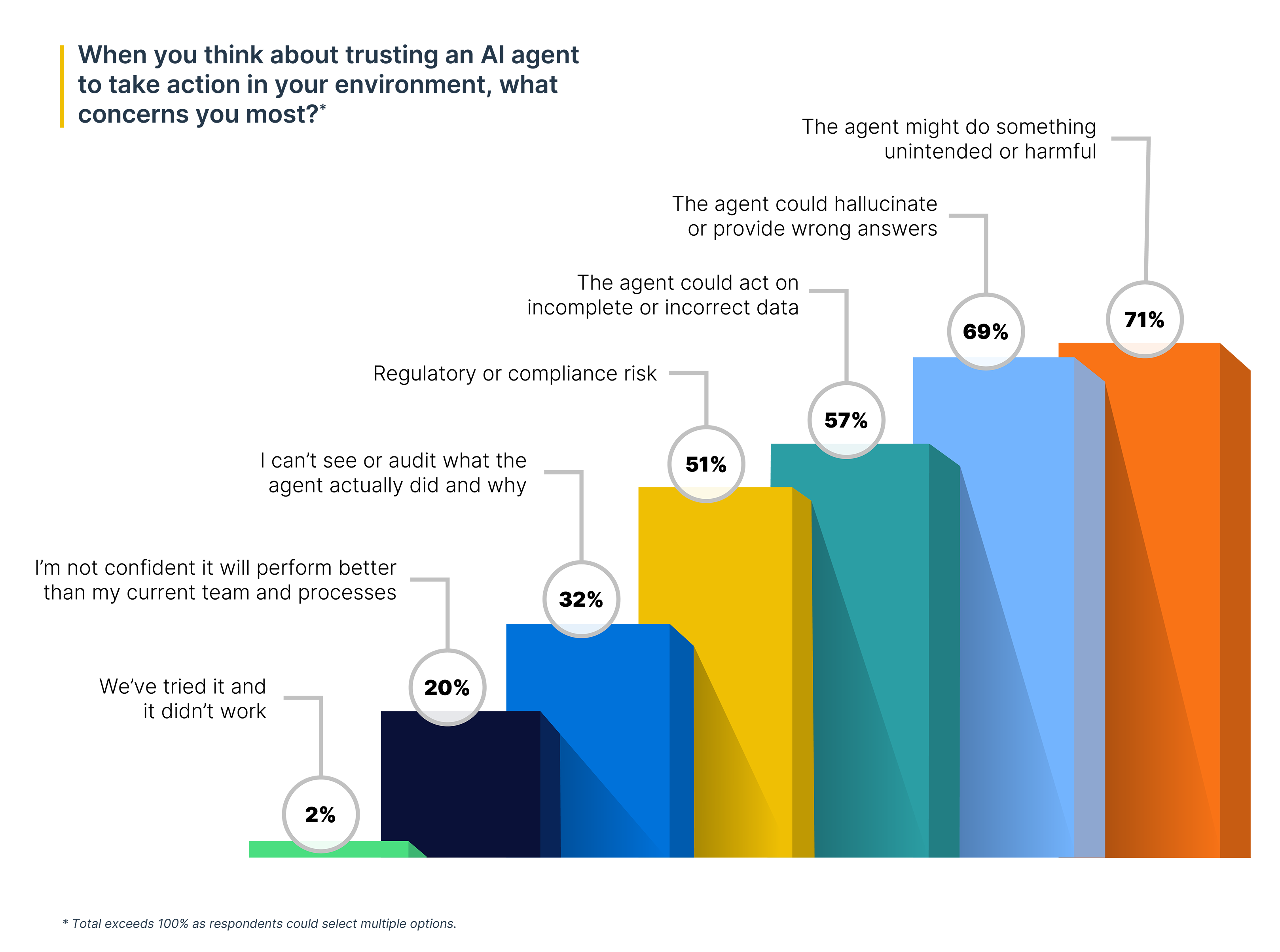

When we asked security leaders why they haven't deployed agents more broadly, trust topped the list. 52% said they don't trust the outputs enough to let agents act autonomously. But trust isn't a single concern.

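64% of respondents flagged three or more trust issues simultaneously. The top three were agents acting on incomplete data, agents hallucinating conclusions, and agents taking unintended actions.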

Read those three together and the pattern becomes clear. Security leaders aren't worried about a single failure mode. They're worried about a compounding one: an agent that acts on incomplete data, hallucinates a conclusion from it, and takes an unintended action as a result. Each concern amplifies the others. That's why solving for trust requires more than fixing one thing.

A second finding in the report looks, on its own, like a different story. Set alongside the trust findings, the connection becomes clear.

84% of security leaders told us their current tools cannot access all their log data for investigations. 65% have had at least one investigation stall because data was trapped in a system their tools couldn't reach. 80% cite the cost of keeping data live and searchable as painful or a major budget concern.

Now look at that next to the trust data. 57% of leaders worry agents will act on incomplete data. 84% admit their tools can't access all their data. Those aren't separate findings. They're the same problem viewed from two angles. An AI agent built on top of incomplete data infrastructure inherits every blind spot that infrastructure already has, and security leaders know it.

Solving for agent capability without solving for data access leaves a gap that the rest of the trust mechanisms can't fully close.

The SANS Institute and Cloud Security Alliance recently issued an emergency strategy briefing warning that defenders who haven't adopted AI agents face "a widening capability gap against AI-augmented adversaries, regardless of their existing technical skill." Their first-priority recommendation was unambiguous: introduce AI agents to the cyber workforce.

But the data in our report shows leaders aren't waiting for vision. They're waiting for proof. When we asked what would make them seriously evaluate an agentic security platform in the next 90 days, the most common answer wasn't a category definition or a thought leadership pitch. It was proven results: demos, POCs, and real-world validation on the security team's own data.

Security teams will never accept unpredictable “black box” agents.

Trusting security agents requires:

These attributes set the stage for security teams to trust agents.

The full report includes our recommendations for security teams looking to close the gap between agreeing with agentic security and actually deploying it. The short version:

1. Layer AI agents into your existing stack. Rip-and-replace migration takes months and adds risk. An agentic solution that works with your current tools can be connected in days.

2. Make sure your agents have access to complete data. Rather than go through the time-consuming and costly process of consolidating your data into a single store, deploy an agentic layer that can access and query that data where it already lives. Agents stuck in a data silo make decisions on a partial picture.

3. Start training L1 analysts to be agentic managers. As agents automate L1 tasks, transition your existing L1s to oversee and validate those agentic workflows. Look for tools with customizable human-in-the-loop controls so you can give agents more autonomy as they earn trust.

There isn't time to wait for the perfect agentic solution. The gap between adversary capability and security team capability is widening every day. Security teams that layer in agents now, and let those agents earn trust, will be the ones that can successfully defend against AI-enabled threat vectors.

Get the full report: The State of Agentic Security 2026: Breaking Through the Trust Barrier.